Optimize AWS Storage Costs with Amazon S3 Lifecycle Configurations

Data is the lifeblood of any business. It is used to make decisions, drive innovation, and serve customers. But data can also be expensive to store at scale in the cloud. That's where storage lifecycle configurations come in. An Amazon S3 lifecycle configuration is a set of rules that define

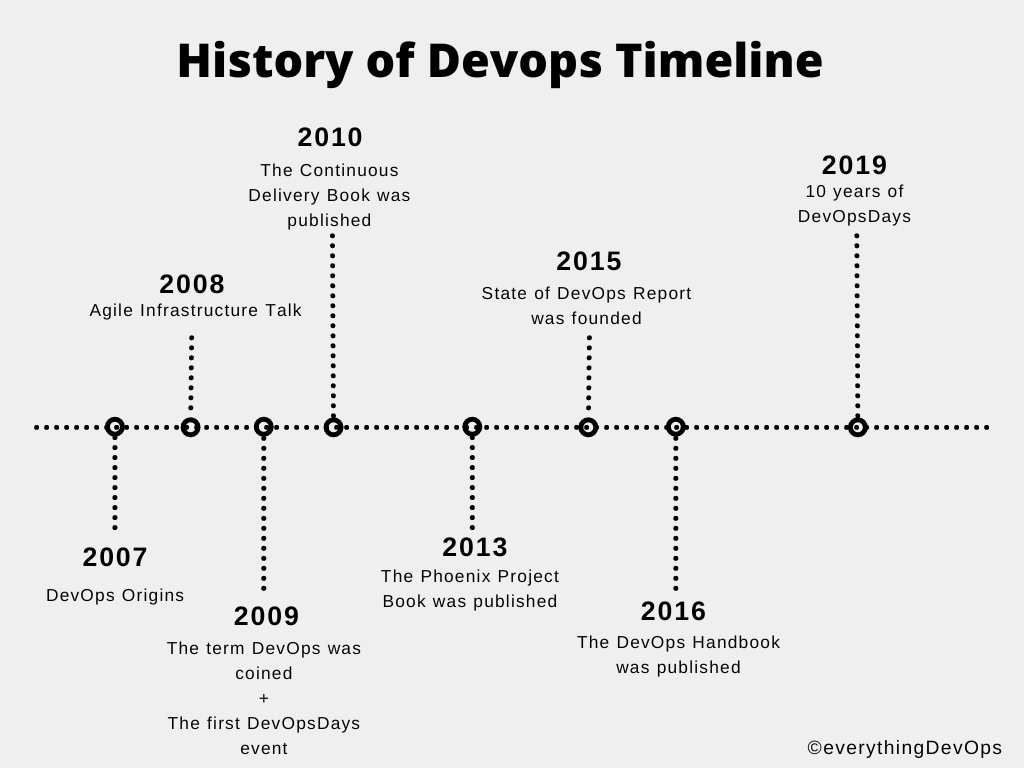

A Brief History of DevOps and Its Impact on Software Development

Software development has been, and continues to be, one of our society's most important building blocks. It has given us the mobile phones we use to stay connected, the rockets we send to space, and a host of other innovations. As complex as these innovations become, the

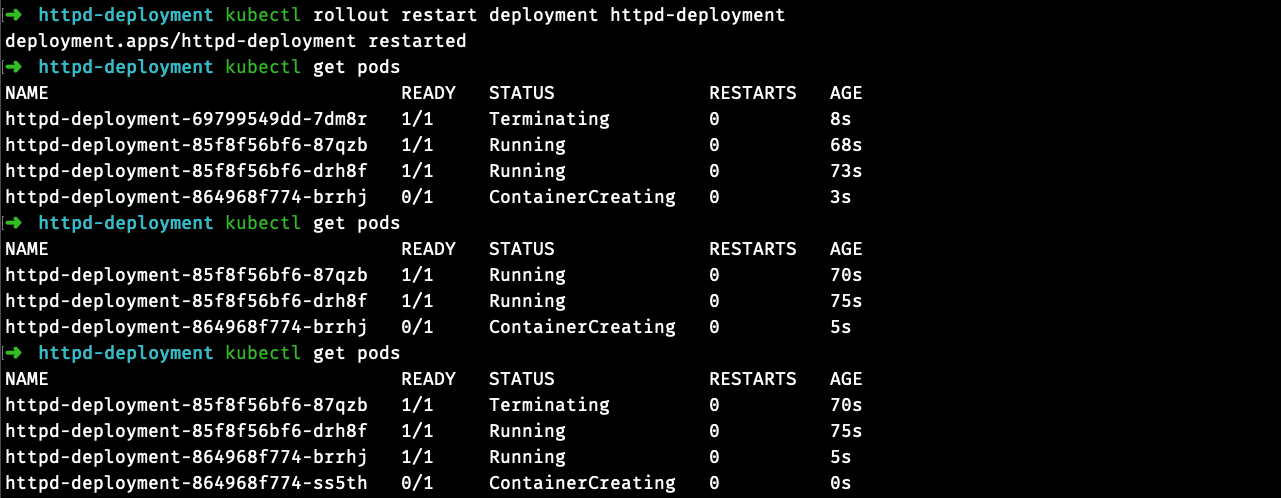

How to restart Kubernetes Pods with kubectl

Anyone who has used Kubernetes for a while knows that things don’t always go as smoothly as you’d like. In production, unexpected things happen, and Pods can crash or fail in unforeseen ways. When this happens, you need a reliable way to restart

How to Deploy a Multi-Container Docker Compose Application on Amazon EC2

Container technology has streamlined how you build, test, and deploy software, from local environments to the cloud or on-premises data centers. But alongside the benefits of building applications with containers came a problem: manually starting and stopping each container when building multi-container applications. To solve this problem,

How to Set Environment Variables on a Linux Machine

When building software, you start in a development environment (your local computer). You then move through one or more other environments (staging, QA, etc.) and, finally, to the production environment, where users can access the application. As you move through these environments, some configuration options may differ.
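
As a rough illustration of why environment variables suit this, here is a minimal Python sketch in which the same code reads its settings from the environment and falls back to development defaults. The variable names `APP_ENV`, `DATABASE_URL`, and `DEBUG`, and the `load_config` helper, are hypothetical and chosen only for this example.

```python
import os


def load_config(env=None):
    """Build a config dict from environment variables, with development defaults.

    APP_ENV, DATABASE_URL, and DEBUG are hypothetical names used for illustration.
    Passing a dict as `env` makes the function easy to test; by default it reads
    the real process environment via os.environ.
    """
    env = os.environ if env is None else env
    return {
        # Which stage we are running in (development, staging, production, ...).
        "app_env": env.get("APP_ENV", "development"),
        # Connection string that typically differs per environment.
        "database_url": env.get("DATABASE_URL", "sqlite:///dev.db"),
        # Treat only the string "true" (any case) as enabling debug mode.
        "debug": env.get("DEBUG", "true").lower() == "true",
    }


if __name__ == "__main__":
    print(load_config())
```

On a Linux machine you could then run the same program in a different configuration by setting the variables first, for example with `export APP_ENV=production` in the shell before starting it.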

How to avoid merge commits when syncing a fork

Whenever you work on open source projects, you usually maintain your own copy (a fork) of the original codebase. To propose changes, you open a Pull Request (PR). After you create a PR, new commits may be made to the original codebase while it is under review, which

Building x86 Images on an Apple M1 Chip

A few months ago, while deploying an application in Amazon Elastic Kubernetes Service (EKS), my pods crashed with a `standard_init_linux.go:228: exec user process caused: exec format error` error. After a bit of research, I found out that the error tends to happen when the architecture an

Kubernetes Architecture Explained: Worker Nodes in a Cluster

When you deploy Kubernetes, you get a cluster. That cluster consists of one or more worker machines (virtual or physical), called nodes, on which you run your containerized applications in Pods. For each worker node to run containerized applications, it must contain a container